- Software Letters


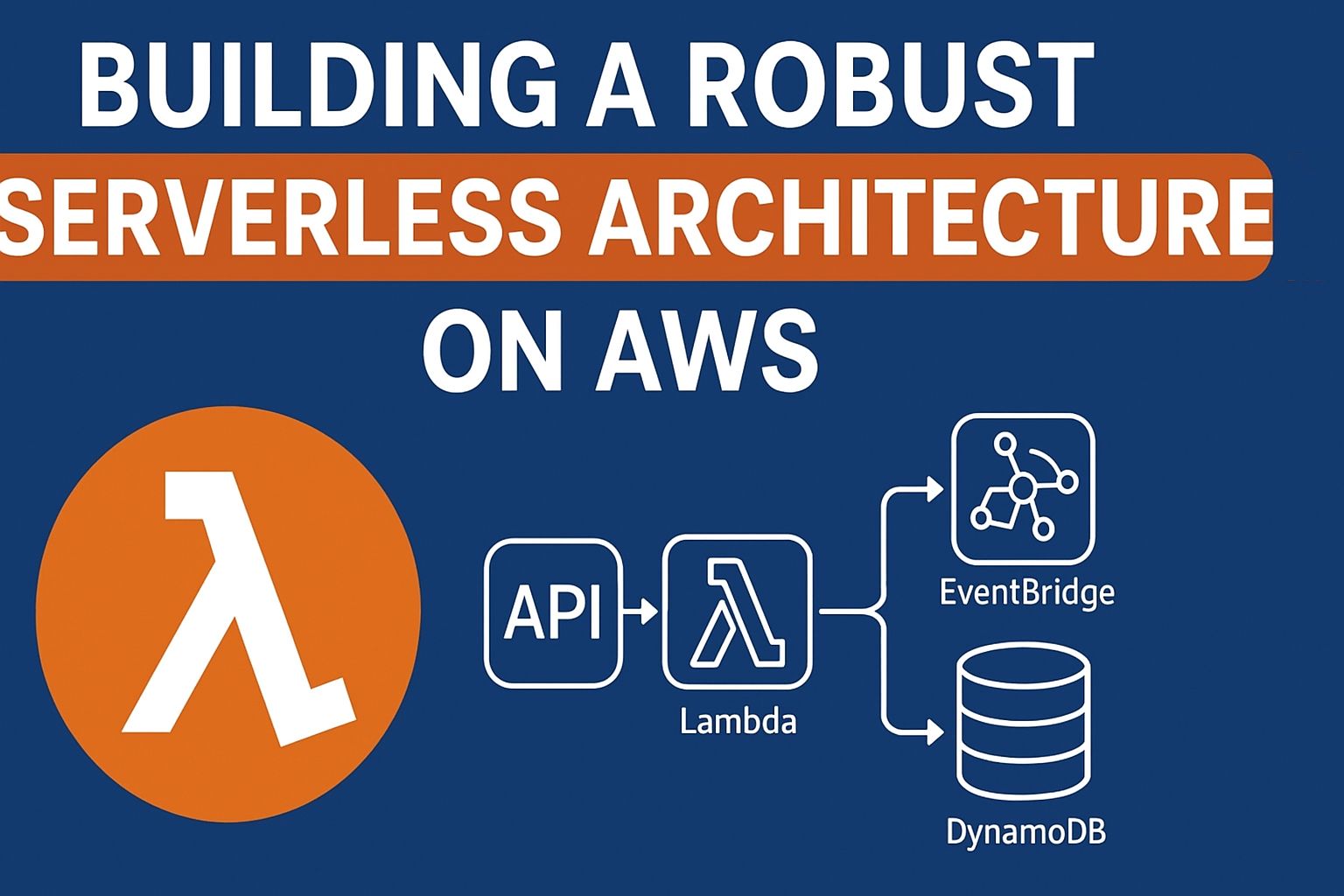


Building a Robust Serverless Architecture on AWS

The hidden pitfalls of serverless on AWS — and how to design resilient, event-driven systems without falling into common traps.

Introduction

“Serverless” often gets marketed as magic: no servers, infinite scale, zero ops. But anyone who has built a production system on AWS Lambda knows the truth: serverless doesn’t remove complexity—it shifts it.

You’re no longer patching EC2 instances at 3 a.m. Instead, you’re designing around cold starts, IAM policies, distributed failures, and service limits.

In this article, we’ll dive into the technical side of serverless architecture on AWS: the core building blocks, patterns that work, pitfalls to avoid, and a real code sample to ground it all.

1. Core AWS Building Blocks

A production-grade serverless system typically involves:

AWS Lambda → event-driven compute

Amazon API Gateway → API endpoints (REST/HTTP/WebSocket)

Amazon DynamoDB → scalable NoSQL store

Amazon SQS / SNS → queues and pub/sub for decoupling

AWS Step Functions → workflow orchestration

Amazon EventBridge → central event bus

Each of these services solves a piece of the puzzle—but each also comes with constraints (timeouts, throughput, pricing quirks).

2. Event-driven as the Foundation

The most reliable serverless architectures are event-driven.

Instead of direct, synchronous calls, you design flows:

An S3 file upload → triggers a Lambda → publishes a message to SQS → another Lambda processes the queue.

Or: an item written to DynamoDB → DynamoDB Streams captures the change → triggers a Lambda.

Each component is atomic, stateless, and independently scalable.

👉 Best practice: decouple functions with events to absorb traffic spikes and contain failures.
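The first flow above (S3 upload → Lambda → SQS) can be sketched as a small forwarding function. This is a minimal illustration, not a production handler. The SQS client is passed in so the logic can be tested without AWS. Inside Lambda you would pass `boto3.client("sqs")` and read the queue URL from an environment variable (for example a hypothetical `JOBS_QUEUE_URL`). Only a reference to the file goes on the queue, and the worker Lambda fetches the object itself.

```python
import json
from urllib.parse import unquote_plus


def s3_records_to_jobs(event):
    """Extract bucket/key job references from an S3 event notification."""
    jobs = []
    for record in event.get("Records", []):
        s3 = record["s3"]
        jobs.append({
            "bucket": s3["bucket"]["name"],
            # Object keys arrive URL-encoded (a space becomes "+").
            "key": unquote_plus(s3["object"]["key"]),
        })
    return jobs


def handle_upload(event, sqs_client, queue_url):
    """Forward each uploaded object to SQS as a small job message.

    sqs_client: anything with boto3's send_message(QueueUrl=..., MessageBody=...).
    Returns the number of messages sent.
    """
    jobs = s3_records_to_jobs(event)
    for job in jobs:
        # The queue carries a pointer, not the file; workers read it from S3.
        sqs_client.send_message(QueueUrl=queue_url, MessageBody=json.dumps(job))
    return len(jobs)
```

Keeping the handler this thin is the point of the pattern: if the downstream workers slow down, messages simply wait in the queue instead of failing the upload trigger.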

3. Technical Challenges

a) Cold Starts

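When no warm execution environment is available (a function that hasn’t been invoked recently, or a traffic burst that needs new instances), Lambda must initialize one first. This cold start can add roughly 200–800 ms, depending on runtime and package size.

That is bad for latency-sensitive APIs.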

Solutions: provisioned concurrency, minimizing package size, or moving critical endpoints to containers (Fargate/ECS).
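Beyond those options, it helps to know what a cold start actually pays for. Module-level code runs once per execution environment, and warm invocations reuse its results. The sketch below moves setup to module scope and flags whether each invocation was cold. `_expensive_setup` is a hypothetical stand-in for real initialization such as creating SDK clients or loading config.

```python
import time


def _expensive_setup():
    """Hypothetical placeholder for real init work (SDK clients, config, pools)."""
    time.sleep(0.01)  # simulate setup cost
    return {"greeting": "hello"}


# Module scope: runs once per execution environment, i.e. during the cold start.
_CONFIG = _expensive_setup()
_cold_start = True


def lambda_handler(event, context):
    global _cold_start
    # The first invocation in this environment is cold; all later ones are warm.
    was_cold, _cold_start = _cold_start, False
    # Logging the flag lets you chart how often users actually hit cold starts.
    print({"coldStart": was_cold})
    return {"coldStart": was_cold, "message": _CONFIG["greeting"]}
```

The same reasoning explains why package size matters: everything imported at module scope is loaded during initialization, before the first request is served.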

b) Error Handling in Distributed Systems

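Errors don’t bubble up a single call stack as they do in a monolith; in asynchronous flows they silently disappear unless you handle them.

Use dead-letter queues (DLQs) with SQS, so messages that keep failing are set aside for inspection instead of being lost.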

Configure retries + exponential backoff.

Centralize observability with CloudWatch + X-Ray or Datadog.
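AWS SDKs already retry their own API calls. For other calls you make (a third-party API, a flaky downstream service), a common approach is exponential backoff with "full jitter." Below is a minimal sketch of that technique. `sleep` and `rng` are injectable so the delays can be checked deterministically, and the parameter defaults are illustrative, not recommendations.

```python
import random
import time


def retry_with_backoff(operation, max_attempts=5, base_delay=0.1, max_delay=5.0,
                       retry_on=(Exception,), sleep=time.sleep, rng=random.random):
    """Call operation() until it succeeds or attempts run out.

    Before retry n (0-based), the delay ceiling is min(max_delay, base_delay * 2**n).
    The actual sleep is a random fraction of that ceiling ("full jitter"), so many
    clients failing at once do not retry in lockstep against a recovering service.
    """
    for attempt in range(max_attempts):
        try:
            return operation()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error (e.g. so it lands in a DLQ)
            ceiling = min(max_delay, base_delay * (2 ** attempt))
            sleep(ceiling * rng())
```

Re-raising after the last attempt matters: swallowing the error would hide the failure from Lambda's own retry and DLQ handling.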

c) Observability

Without proper tracing, debugging is hell.

Enable X-Ray to follow event flows.

Use correlation IDs across services.

Log business metrics (not just technical ones).
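Correlation IDs and business metrics work best when every log line is structured. The sketch below reuses an incoming correlation ID (or starts a new one), emits JSON log lines that CloudWatch Logs Insights can filter on, and builds SQS message attributes to carry the ID downstream. The header name `x-correlation-id` is a hypothetical convention; any name works if every service agrees on it.

```python
import json
import uuid

CORRELATION_HEADER = "x-correlation-id"  # hypothetical convention, not an AWS standard


def get_correlation_id(event):
    """Reuse the caller's correlation ID if present, otherwise start a new one."""
    headers = event.get("headers") or {}
    # HTTP header names are case-insensitive, so normalize before the lookup.
    normalized = {name.lower(): value for name, value in headers.items()}
    return normalized.get(CORRELATION_HEADER) or str(uuid.uuid4())


def log_event(correlation_id, message, **fields):
    """Print one JSON log line; extra fields can carry business metrics."""
    line = json.dumps({"correlationId": correlation_id, "message": message, **fields},
                      sort_keys=True)
    print(line)
    return line


def sqs_message_attributes(correlation_id):
    """SQS MessageAttributes that propagate the ID to downstream consumers."""
    return {"correlationId": {"DataType": "String", "StringValue": correlation_id}}
```

For example, `log_event(cid, "order placed", orderTotal=42)` logs a business fact alongside the technical context, and the same `cid` can be passed as `MessageAttributes` when sending to SQS.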

4. Cost Management

Serverless isn’t always cheaper.

A busy Lambda can cost more than a reserved EC2 instance.

DynamoDB on-demand billing can spike under sudden load.

👉 Best practice:

Set up AWS Budgets and billing alarms.

Switch to provisioned capacity when workloads are predictable.

Tune Lambda memory for the sweet spot of performance vs. cost.
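The memory "sweet spot" comes down to arithmetic. Lambda charges a per-request fee plus compute measured in GB-seconds (allocated memory × billed duration), and CPU scales with memory. So a larger setting that finishes proportionally faster can cost the same or less. The sketch below estimates monthly cost. The prices and durations used in the example are hypothetical round numbers, not AWS's published rates; substitute current pricing for your region and your own measured durations. The free tier is ignored.

```python
def monthly_lambda_cost(invocations, avg_duration_ms, memory_mb,
                        price_per_gb_second, price_per_million_requests):
    """Estimate monthly Lambda cost: request fees + GB-seconds of compute."""
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    compute_cost = gb_seconds * price_per_gb_second
    request_cost = invocations / 1_000_000 * price_per_million_requests
    return round(compute_cost + request_cost, 2)


# Hypothetical prices and measured durations, for comparison only.
PRICE_GB_S = 0.00001
PRICE_PER_M = 0.20

for memory_mb, duration_ms in [(512, 450), (1024, 200), (2048, 100)]:
    cost = monthly_lambda_cost(10_000_000, duration_ms, memory_mb, PRICE_GB_S, PRICE_PER_M)
    print(f"{memory_mb} MB @ {duration_ms} ms -> ${cost}")
```

With these made-up numbers, 1024 MB and 2048 MB cost the same while 2048 MB halves latency, and the cheapest-looking 512 MB setting is actually the most expensive. Measuring real durations at several memory sizes is what reveals this.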

5. Architecture Patterns

Pattern 1: API Gateway + Lambda + DynamoDB

Use case: CRUD-style applications, lightweight APIs, microservices.

How it works:

Client sends an HTTP request to API Gateway.

API Gateway triggers a Lambda function.

Lambda executes business logic and persists/retrieves data in DynamoDB.

Response flows back to API Gateway → client.

Example flow:

POST /items → Lambda → DynamoDB → returns item ID.

GET /items/{id} → Lambda → fetch item → return JSON.
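Both routes of that flow can live in one small handler. The sketch below assumes the REST API (v1) proxy event shape that SAM's `Type: Api` produces (`httpMethod`, `pathParameters`, `body`); HTTP APIs use a different payload format. The table is injected so the routing can be tested with a fake. In Lambda it would be `boto3.resource("dynamodb").Table(name)`.

```python
import json
import uuid
from decimal import Decimal


def _to_json_number(value):
    # The DynamoDB resource API returns numbers as Decimal; json.dumps needs help.
    if isinstance(value, Decimal):
        return int(value) if value == value.to_integral_value() else float(value)
    raise TypeError(f"Not JSON serializable: {type(value).__name__}")


def _response(status, body):
    return {"statusCode": status, "body": json.dumps(body, default=_to_json_number)}


def make_handler(table):
    """Route POST /items and GET /items/{id}; `table` has boto3-style put_item/get_item."""
    def handler(event, context):
        method = event.get("httpMethod")
        if method == "POST":
            # parse_float=Decimal because the DynamoDB resource API rejects floats.
            payload = json.loads(event.get("body") or "{}", parse_float=Decimal)
            item = {"id": str(uuid.uuid4()), "payload": payload}
            table.put_item(Item=item)
            return _response(201, {"id": item["id"]})
        if method == "GET":
            item_id = (event.get("pathParameters") or {}).get("id")
            if not item_id:
                return _response(400, {"error": "missing id"})
            found = table.get_item(Key={"id": item_id}).get("Item")
            if found is None:
                return _response(404, {"error": "not found"})
            return _response(200, found)
        return _response(405, {"error": "method not allowed"})

    return handler
```

In SAM, each route would still be a separate `Api` event pointing at this function; whether to use one function per route or one per resource is a judgment call.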

Strengths:

Fully managed, zero infra ops.

Auto-scaling, pay-per-use.

DynamoDB offers single-digit millisecond latency even at scale.

Challenges:

Lambda cold starts may add latency for APIs (mitigated with provisioned concurrency).

DynamoDB requires careful schema design: poor partition key choices can cause hot partitions.

Limited transaction support compared to SQL databases.
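For the hot-partition problem, one mitigation described in DynamoDB's best-practice guidance is write sharding: spread one heavily written logical key (say, a date) across N partition-key values by appending a suffix. The sketch below derives the suffix from a stable hash of the item ID, so a single item can still be read back with one lookup. The shard count and key format are hypothetical choices.

```python
import hashlib

SHARD_COUNT = 10  # hypothetical; size it to the write rate of your hottest key


def sharded_partition_key(logical_key, item_id, shard_count=SHARD_COUNT):
    """Map an item to one of shard_count partition keys for the same logical key.

    A stable hash (not random) means the same item always lands on the same shard.
    """
    digest = hashlib.sha256(item_id.encode("utf-8")).digest()
    shard = int.from_bytes(digest[:4], "big") % shard_count
    return f"{logical_key}#{shard}"


def all_shard_keys(logical_key, shard_count=SHARD_COUNT):
    """Partition keys to query (and merge) when reading everything for a logical key."""
    return [f"{logical_key}#{i}" for i in range(shard_count)]
```

The trade-off is on the read side: listing all items for a date now takes `SHARD_COUNT` queries instead of one.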

When to use:

SaaS backends, MVPs, mobile apps.

Systems with unpredictable or spiky traffic.

When not to use:

Heavy relational queries, joins, or reporting workloads → use Aurora Serverless instead.

Pattern 2: EventBridge + Step Functions

Use case: Orchestrating complex business workflows, stateful processes.

How it works:

An event (e.g., “New User Created”) is published to EventBridge.

EventBridge routes it to a Step Functions state machine.

Step Functions executes a sequence (or parallel set) of Lambdas or service integrations.

Example: validate user → send welcome email → provision resources → log analytics.

Each step is tracked and can be retried on failure or routed to compensating (rollback) steps, as defined in the state machine.
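The first hop of that flow, publishing the event, might look like the sketch below. The `source` and `detail-type` values are hypothetical naming choices, and the same strings appear in the event pattern a rule would use to start the state machine. The client is injected; in Lambda it would be `boto3.client("events")`.

```python
import json

# Pattern for an EventBridge rule targeting the onboarding state machine.
# Source and detail-type strings are hypothetical conventions.
USER_CREATED_PATTERN = {"source": ["myapp.users"], "detail-type": ["User Created"]}


def publish_user_created(events_client, user_id, email, bus_name="default"):
    """Publish a "User Created" event; any matching rule routes it to its targets."""
    entry = {
        "Source": USER_CREATED_PATTERN["source"][0],
        "DetailType": USER_CREATED_PATTERN["detail-type"][0],
        "Detail": json.dumps({"userId": user_id, "email": email}),
        "EventBusName": bus_name,
    }
    response = events_client.put_events(Entries=[entry])
    # put_events reports per-entry failures in the response instead of raising.
    if response.get("FailedEntryCount", 0) > 0:
        raise RuntimeError(f"EventBridge rejected the event: {response['Entries']}")
    return entry
```

The publisher knows nothing about onboarding. Adding a new consumer later means adding a rule, not changing this code.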

Example flow (User Onboarding):

Event: user.created

Step 1: Verify email with Lambda.

Step 2: Create a record in DynamoDB.

Step 3: Send a welcome email via SES.

Step 4: Notify analytics pipeline.
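The four steps can be expressed as an Amazon States Language definition. The sketch below builds it as a Python dict, with a declarative retry policy on every task. All function names are hypothetical. For uniformity every step invokes a Lambda here, although steps 2 and 3 could instead use Step Functions' direct DynamoDB and SES integrations and skip the glue functions.

```python
import json

# Declarative retry: up to 3 retries, waiting 2 s, 4 s, then 8 s.
RETRY_ON_TASK_FAILURE = [{
    "ErrorEquals": ["States.TaskFailed"],
    "IntervalSeconds": 2,
    "MaxAttempts": 3,
    "BackoffRate": 2.0,
}]


def lambda_task(function_name, next_state=None):
    """Task state that invokes a Lambda through the lambda:invoke integration."""
    state = {
        "Type": "Task",
        "Resource": "arn:aws:states:::lambda:invoke",
        "Parameters": {"FunctionName": function_name, "Payload.$": "$"},
        "OutputPath": "$.Payload",  # pass only the function's return value onward
        "Retry": RETRY_ON_TASK_FAILURE,
    }
    if next_state is None:
        state["End"] = True
    else:
        state["Next"] = next_state
    return state


def linear_state_machine(steps, comment):
    """Chain (state_name, function_name) pairs into a linear ASL definition."""
    states = {}
    for i, (state_name, function_name) in enumerate(steps):
        next_state = steps[i + 1][0] if i + 1 < len(steps) else None
        states[state_name] = lambda_task(function_name, next_state)
    return {"Comment": comment, "StartAt": steps[0][0], "States": states}


# Hypothetical function names for the onboarding steps.
ONBOARDING_STEPS = [
    ("VerifyEmail", "VerifyEmailFunction"),
    ("CreateUserRecord", "CreateUserRecordFunction"),
    ("SendWelcomeEmail", "SendWelcomeEmailFunction"),
    ("NotifyAnalytics", "NotifyAnalyticsFunction"),
]

if __name__ == "__main__":
    print(json.dumps(linear_state_machine(ONBOARDING_STEPS, "User onboarding"), indent=2))
```

The printed JSON is the kind of document you would hand to Step Functions (for example as a SAM state machine definition). Note that the retry behavior lives entirely in data, with no retry loops in the functions themselves.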

Strengths:

Visual workflows make it easier to understand long processes.

Retry/backoff policies are declarative, no custom code needed.

200+ AWS service integrations without writing glue code.

Excellent for compliance/audit since each state is logged.

Challenges:

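Standard Workflows are priced per state transition, so a chatty or poorly designed state machine can get costly (Express Workflows, priced by requests and duration, are often cheaper for high-volume, short-lived flows).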

Debugging across multiple retries requires observability discipline.

Risk of “orchestration bloat” if too much logic is pushed into the state machine.

When to use:

Multi-step processes with dependencies (KYC checks, ETL pipelines, order fulfillment).

Anywhere you’d normally build a “workflow engine.”

When not to use:

Ultra-simple linear flows (one Lambda is enough).

High-frequency, ultra-low-latency systems (state machines add overhead).

Pattern 3: S3 + Lambda + SQS

Use case: File ingestion, media processing, batch pipelines.

How it works:

A file is uploaded to S3 (e.g., CSV, image, video).

S3 triggers a Lambda to process metadata.

If processing is heavy, Lambda pushes a job reference into SQS.

Worker Lambdas consume SQS messages in parallel to process files asynchronously.

Results stored in DynamoDB, S3, or another downstream system.

Example flow (Video Processing):

User uploads video.mp4 → S3 event triggers a Lambda.

The Lambda stores job metadata in DynamoDB and pushes the job to SQS.

Worker Lambdas pick up messages and call AWS Elemental MediaConvert for transcoding.

Another Lambda updates DynamoDB when processing is done.

Strengths:

Asynchronous, decoupled, scalable.

SQS absorbs spikes in traffic—no function overload.

Cheap storage in S3; durable, at-least-once delivery in SQS.

Challenges:

Requires idempotent processing: S3 event notifications and SQS messages can be delivered more than once, and failed messages are retried.

Message visibility timeouts in SQS must be tuned carefully.

Monitoring + tracing is harder in async systems.
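The idempotency and retry challenges meet in the worker Lambda. The sketch below skips messages whose job was already processed and returns `batchItemFailures`. With `ReportBatchItemFailures` enabled on the SQS event source mapping, Lambda then retries only the failed messages, and after the queue's `maxReceiveCount` they move to the DLQ. The `jobId` field and the injected `already_processed`/`mark_processed` callables are hypothetical. In production the check would typically be an atomic DynamoDB conditional write, because a separate check-then-mark step can race when duplicates arrive concurrently. Separately, AWS's guidance is to set the queue's visibility timeout to at least six times the function timeout, so in-flight messages do not reappear mid-processing.

```python
import json


def make_sqs_handler(process_job, already_processed, mark_processed):
    """SQS batch handler that skips duplicates and reports per-message failures."""
    def handler(event, context):
        failures = []
        for record in event.get("Records", []):
            try:
                job = json.loads(record["body"])
                job_id = job["jobId"]  # hypothetical idempotency key in the message
                if already_processed(job_id):
                    continue  # duplicate delivery: the work is already done
                process_job(job)
                mark_processed(job_id)
            except Exception:
                # Report just this message; the rest of the batch is deleted.
                failures.append({"itemIdentifier": record["messageId"]})
        return {"batchItemFailures": failures}

    return handler
```

Reporting failures per message avoids the "retry storm" listed in the comparison table, where one bad file causes an entire batch of good files to be reprocessed.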

When to use:

ETL pipelines, image/video processing, IoT ingestion.

High-volume batch processing where workloads can be distributed.

When not to use:

Latency-sensitive flows (async means seconds, not ms).

Very small workloads that don’t justify pipeline complexity.

Pattern Comparison

| Pattern | Best For | Latency | Complexity | Cost Model | Risk |
|---|---|---|---|---|---|
| API Gateway + Lambda + DynamoDB | CRUD APIs, microservices | Low (50–300 ms) | Low | Pay per request | Schema design mistakes |
| EventBridge + Step Functions | Business workflows | Medium (seconds) | Medium-High | Pay per state transition | State machine bloat |
| S3 + Lambda + SQS | File ingestion, pipelines | Medium-High (seconds to minutes) | Medium | Pay per invocation + storage | Retry storms, idempotency |

👉 These three patterns cover most common real-world serverless use cases on AWS. The art is knowing when to apply which, and when not to.

6. Anti-patterns in Serverless Architecture

Even with AWS’s powerful primitives, it’s easy to design a system that looks “serverless” but fails at scale or becomes unmaintainable. Here are the most common traps — and how to avoid them.

❌ 1. Fat Lambdas (a.k.a. Monolithic Functions)

What happens:

A single Lambda function contains all the business logic for multiple features (user signup, payments, notifications).

The codebase grows bloated, deploy times skyrocket, and every change risks breaking unrelated functionality.

Why it’s bad:

Longer cold start times (big packages = slower initialization).

Difficult to test, hard to isolate failures.

Violates the single responsibility principle.

Real-world example:

A team puts their entire CRUD backend into one Lambda behind API Gateway. At first, it works fine. Six months later, the function has 5,000+ lines of code, deployment takes 5 minutes, and debugging one bug means redeploying everything.

Better approach:

Split by domain/functionality: CreateUserFunction, ProcessPaymentFunction, SendEmailFunction.

Use AWS SAM or the Serverless Framework to manage multiple Lambdas as a single project.

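Keep packages small; move shared heavy dependencies into Lambda layers (this keeps deployments lean, though layer contents still count toward the function’s unzipped size limit).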

❌ 2. Synchronous Lambda-to-Lambda Calls

What happens:

One Lambda calls another directly, either through the Lambda Invoke API (e.g. boto3.client("lambda").invoke()) or with an HTTP call through API Gateway.

Why it’s bad:

Increases latency — each hop adds milliseconds.

Creates tight coupling — failure in one function cascades upstream.

Hard to debug chains of synchronous calls.

Real-world example:

A team builds an order service where ValidateOrderLambda calls ChargePaymentLambda, which calls SendReceiptLambda. During a payment outage, every Lambda fails in a chain, and debugging the failure requires tracing multiple logs.

Better approach:

Use asynchronous decoupling via SQS, EventBridge, or Step Functions.

Functions should communicate through events, not direct calls.

Reserve synchronous calls only for true request-response APIs.

❌ 3. Mixing Async and Sync Without a Strategy

What happens:

Some flows are synchronous (API calls), others are async (events), but there’s no clear design.

A synchronous API depends on an async downstream system (e.g., an S3-triggered Lambda), causing unpredictable behavior.

Why it’s bad:

Client requests may hang or fail while waiting on async tasks.

Messages may be lost if the async system retries/fails and the sync caller doesn’t know.

Creates “half-event-driven, half-HTTP” spaghetti.

Real-world example:

An upload API accepts a file, stores it in S3, then waits for a Lambda to process it asynchronously before returning. Sometimes it works; sometimes the Lambda lags or retries, and the client times out.

Better approach:

Define boundaries: APIs handle fast, synchronous tasks only.

Push heavy/long tasks into asynchronous pipelines (S3 + SQS + Lambda).

If you need sync + async together → design with callbacks or polling.
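The polling variant can be sketched as two handlers: `submit` records a job, hands it to the async pipeline, and returns `202 Accepted` immediately, while `status` reports progress. Here the job store is a plain dict and `enqueue` a plain callable, both hypothetical stand-ins for DynamoDB and SQS. The `/jobs/{id}` path and the `objectKey` request field are likewise illustrative.

```python
import json
import uuid


def make_upload_api(jobs, enqueue):
    """Return (submit, status) handlers for an async job API with polling."""
    def submit(event, context):
        job_id = str(uuid.uuid4())
        jobs[job_id] = {"status": "PENDING"}
        enqueue({"jobId": job_id, "objectKey": json.loads(event["body"])["objectKey"]})
        # Respond right away; the client polls the Location URL instead of waiting.
        return {"statusCode": 202,
                "headers": {"Location": f"/jobs/{job_id}"},
                "body": json.dumps({"jobId": job_id, "status": "PENDING"})}

    def status(event, context):
        job_id = event["pathParameters"]["id"]
        job = jobs.get(job_id)
        if job is None:
            return {"statusCode": 404, "body": json.dumps({"error": "unknown job"})}
        return {"statusCode": 200, "body": json.dumps({"jobId": job_id, **job})}

    return submit, status
```

The worker at the end of the pipeline is the only thing that changes the job's status, so the API never blocks on it. A callback (webhook or WebSocket push) replaces the polling loop when clients can receive one.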

❌ 4. Assuming Multi-region Resilience by Default

What happens:

Teams assume “it’s AWS, so it’s automatically global and resilient.”

They deploy only to us-east-1 and think they’re safe.

Why it’s bad:

Serverless services are regional by default. If us-east-1 has an outage, your API, Lambdas, and DynamoDB tables in that region go down with it.

Data residency laws may also require storing customer data in specific regions, which pushes you toward a multi-region setup anyway.

Real-world example:

A fintech app deployed fully serverless in us-east-1. During a regional outage, payments and APIs went offline for 3 hours. Customers in Europe couldn’t access the service, despite “being on AWS.”

Better approach:

Deploy multi-region active-active architectures if uptime is critical.

Example: DynamoDB Global Tables replicate across regions.

Use Route 53 latency-based (or failover) routing to direct clients to regional API Gateway endpoints behind a custom domain name.

Use global services where possible (e.g., CloudFront), and replicate regional data stores explicitly (e.g., S3 Cross-Region Replication).

Be intentional: resilience is architected, not automatic.

7. When Not to Use Serverless

APIs requiring ultra-low latency (<10ms, e.g. gaming or trading).

Constant, heavy workloads (ECS/EKS with reserved instances can be cheaper).

GPU or long-running jobs: Lambda offers no GPUs and caps execution at 15 minutes, so use AWS Batch or containers instead.

8. Example: Serverless CRUD API with AWS SAM

Here’s a minimal example of the create path (POST /items) of a CRUD API with API Gateway + Lambda + DynamoDB using AWS SAM:

```yaml
AWSTemplateFormatVersion: '2010-09-09'
Transform: AWS::Serverless-2016-10-31

Resources:
  MyTable:
    Type: AWS::DynamoDB::Table
    Properties:
      TableName: Items
      BillingMode: PAY_PER_REQUEST
      AttributeDefinitions:
        - AttributeName: id
          AttributeType: S
      KeySchema:
        - AttributeName: id
          KeyType: HASH

  MyFunction:
    Type: AWS::Serverless::Function
    Properties:
      CodeUri: src/
      Handler: app.lambda_handler
      Runtime: python3.12
      Policies:
        # Without this, put_item fails with AccessDenied (SAM policy template).
        - DynamoDBCrudPolicy:
            TableName: !Ref MyTable
      Events:
        Api:
          Type: Api
          Properties:
            Path: /items
            Method: post
      Environment:
        Variables:
          TABLE_NAME: !Ref MyTable
```

And a minimal Python Lambda handler (src/app.py, matching Handler: app.lambda_handler):

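```python
import json
import os
import uuid
from decimal import Decimal

import boto3

# Created once per execution environment and reused by warm invocations.
dynamodb = boto3.resource("dynamodb")
table = dynamodb.Table(os.environ["TABLE_NAME"])


def _json_default(value):
    # Numbers parsed as Decimal (see below) must be converted back for json.dumps.
    if isinstance(value, Decimal):
        return int(value) if value == value.to_integral_value() else float(value)
    raise TypeError(f"Not JSON serializable: {type(value).__name__}")


def lambda_handler(event, context):
    try:
        # parse_float=Decimal: the DynamoDB resource API rejects Python floats.
        payload = json.loads(event.get("body") or "", parse_float=Decimal)
    except json.JSONDecodeError:
        return {"statusCode": 400, "body": json.dumps({"error": "Invalid JSON body"})}

    item = {"id": str(uuid.uuid4()), "payload": payload}
    table.put_item(Item=item)

    return {
        "statusCode": 200,
        "body": json.dumps(item, default=_json_default),
    }
```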

This simple example shows the essence of serverless: event in → function → persistent store → response.

Conclusion

Serverless on AWS isn’t about “removing servers.” It’s about removing ownership of infrastructure—while taking on a new set of architectural responsibilities.

When used well, serverless gives you:

Elasticity without ops overhead

Faster delivery cycles

Costs aligned with actual usage

When used poorly, it leads to:

Unpredictable bills

Spaghetti architectures

Debugging nightmares

👉 The real skill isn’t just “writing Lambda functions.”

It’s designing event-driven systems that are resilient, observable, and cost-aware.